By Dr. Barna Szabó

Engineering Software Research and Development, Inc.

St. Louis, Missouri USA

The idea of a digital twin originated at NASA in the 1960s as a “living model” of the Apollo program. When Apollo 13 experienced an oxygen tank explosion, NASA utilized multiple simulators and extended a physical model of the spacecraft to include digital simulations, creating a digital twin. This twin was used to analyze the events leading up to the accident and investigate ideas for a solution. The term “digital twin” was coined by NASA engineer John Vickers much later. While the term is commonly associated with modeling physical objects, it is also employed to represent organizational processes. Here, we consider digital twins of physical entities only.

Digital Twins: An Overview

An overview of the current understanding of the idea of digital twins at NASA is available in a keynote presentation delivered in 2021 [1]. This presentation contains the following quote from reference [2]:

“The Digital Twin (DT) is a set of virtual information constructs that fully describes a potential or actual physical manufactured product from the micro atomic level to the macro geometrical level. At its optimum, any information that could be obtained from inspecting a physical manufactured product can be obtained from its Digital Twin.”

I think that this is closer to being an aspirational statement than a functional definition of digital twins. On the positive side, this statement articulates that the reliability of the results of the simulation should be comparable to that of a physical experiment. Note that this is possible only when mathematical models are used within their domains of calibration [3]. On the negative side, the description of a product “from the micro atomic level to the macro geometrical level” is neither necessary nor feasible. The goal of a simulation project is not to describe a physical system from A to Z but rather to predict the quantities of interest, such as expected fatigue life, margins of safety, limit load, deformation, natural frequency, and the like. In view of this, I propose the following definition:

“A Digital Twin (DT) is a set of mathematical models formulated to predict quantities of interest that characterize the functioning of a potential or actual manufactured product. When the mathematical models are used within their domains of calibration, the reliability of the predictions is comparable to that of a physical experiment.”

The set of mathematical models may comprise a single model of a component or several interacting component models. The motivation for creating digital twins typically comes from the requirements of product lifecycle management: High-value assets are monitored throughout their lifecycles, and the models that constitute a digital twin are updated with new data as they become available. This fits into the framework of model development projects discussed in one of my blogs, “Model Development in the Engineering Sciences,” and in greater detail in reference [3]. An essential attribute of any mathematical model is its domain of calibration.
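To make the idea of a domain of calibration concrete, the following minimal sketch assumes that the domain can be represented as a set of closed intervals, one per model parameter. This box-shaped representation, the class and function names, and the parameter names and ranges are hypothetical and chosen for illustration only; they do not come from any particular digital twin.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CalibrationRange:
    """Closed interval over which one parameter was calibrated (hypothetical)."""
    name: str
    lower: float
    upper: float

    def contains(self, value: float) -> bool:
        return self.lower <= value <= self.upper


def outside_domain_of_calibration(ranges, inputs):
    """Return the names of parameters that are missing or outside their interval."""
    violations = []
    for r in ranges:
        value = inputs.get(r.name)
        if value is None or not r.contains(value):
            violations.append(r.name)
    return violations


# Illustrative ranges only; real ranges come from the calibration records.
domain = [
    CalibrationRange("temperature_C", -50.0, 120.0),
    CalibrationRange("stress_ratio", -1.0, 0.5),
]

in_domain = outside_domain_of_calibration(
    domain, {"temperature_C": 25.0, "stress_ratio": 0.1}
)
out_of_domain = outside_domain_of_calibration(
    domain, {"temperature_C": 150.0, "stress_ratio": 0.1}
)
```

An empty list means the prediction is requested inside the assumed domain of calibration. A non-empty list names the parameters for which the prediction would be an extrapolation and should not be treated as comparable to a physical experiment.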

Example 1: Component Twin

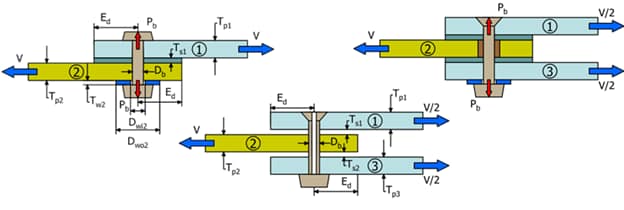

The Single Fastener Analysis Tool (SFAT) is a smart application engineered for comprehensive analyses of single and double shear joints of metal or composite plates. It also serves as an example of a component twin and highlights the technical challenges involved in the development of digital twins.

SFAT offers the flexibility to model laminates either as ply-by-ply or homogenized entities. It can accommodate various types of fastener heads, such as protruding and countersunk, including those with hollow shafts. It is capable of supporting different fits such as neat, interference, and clearance.

SFAT also provides additional input options to account for factors like shimmed and unshimmed gaps, bushings, and washers. The application allows for the specification of shear load and fastener pre-load as loading conditions. It provides estimates of the errors of approximation in terms of the quantities of interest.

Example 2: Asset Twin

A good example of asset twins is the structural health monitoring of large concrete dams. Following the collapse of the Malpasset dam in Provence, France, in 1959, the World Bank mandated that all dam projects seeking financial backing must undergo modeling and testing at the Experimental Institute for Models and Structures in Bergamo, Italy (ISMES). Subsequently, ISMES was commissioned to develop a system that would monitor the structural health of large dams. The dams would be instrumented, and a numerical simulation framework, now called a digital twin, would be used to evaluate anomalies indicated by the instruments.

It was understood that numerical approximation errors would have to be controlled to small tolerances to ensure that they were negligibly small in comparison with the errors in measurements. To perform the calculations, a finite element program based on the p-version was written at ISMES in the second half of the 1970s under the direction of Dr. Alberto Peano, my former D.Sc. student. That program is still in use today under the name FIESTA [4].
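The requirement that numerical errors be negligible relative to measurement errors can be illustrated with a small sketch. It assumes that a quantity of interest has been computed for a sequence of increasing polynomial degrees and uses the relative change between the two most refined solutions as a crude error indicator. This indicator, the function name, the 10% threshold, and the sample values are assumptions made for illustration; this is not the estimator used in FIESTA or in any other program.

```python
def numerical_error_is_negligible(qoi_sequence, measurement_rel_error, ratio=0.1):
    """Compare a crude numerical error indicator with the measurement error.

    qoi_sequence: values of one quantity of interest from successively
        refined solutions (for example, increasing polynomial degree).
    measurement_rel_error: relative error of the instrument reading.
    ratio: fraction of the measurement error regarded as negligible
        (an assumed tolerance, not a standard value).
    """
    if len(qoi_sequence) < 2:
        raise ValueError("at least two solutions are needed")
    last, previous = qoi_sequence[-1], qoi_sequence[-2]
    if last == 0.0:
        raise ValueError("relative change is undefined for a zero value")
    indicator = abs(last - previous) / abs(last)
    return indicator, indicator <= ratio * measurement_rel_error


# Hypothetical crest displacements (normalized) for p = 1, 2, 3, 4.
qoi_by_p = [1.20, 1.05, 1.002, 1.0001]
estimate, negligible = numerical_error_is_negligible(qoi_by_p, measurement_rel_error=0.05)
```

A small change between successive solutions does not by itself prove convergence, so in practice the whole sequence should be examined rather than only its last two members.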

Simulation Governance: Essential for Digital Twin Creation

Creating digital twins encompasses all aspects of model development, necessitating separate treatment of model form errors and approximation errors. In other words, the verification, validation, and uncertainty quantification (VVUQ) procedures have to be applied. The model must be updated and recalibrated when new ideas are proposed or new data become available. The only difference from other model development projects is that, in the case of digital twins, the updates involve object-specific data collected over the life span of the physical object.

Model development projects are classified as progressive, stagnant, or improper. A model development project is progressive if the domain of calibration is increasing, stagnant if it is not increasing, and improper if the problem-solving machinery is not consistent with the formulation of the mathematical model or lacks the ability to support solution verification [3]. The goal of simulation governance is to ensure that digital twin projects are progressive. Unfortunately, owing to a lack of simulation governance, the large majority of model development projects are improper, and hence, most digital twins fail to meet the required standards of reliability.
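The classification above can be written as a short decision rule. The sketch below is illustrative only: the function and argument names are hypothetical, and checking the "improper" conditions before looking at the domain of calibration is an assumption about precedence that the text does not state explicitly.

```python
from enum import Enum


class ProjectStatus(Enum):
    PROGRESSIVE = "progressive"
    STAGNANT = "stagnant"
    IMPROPER = "improper"


def classify_project(
    consistent_with_formulation: bool,
    supports_solution_verification: bool,
    domain_of_calibration_increasing: bool,
) -> ProjectStatus:
    """Classify a model development project using the three categories."""
    # Assumed precedence: a project whose problem-solving machinery is
    # inconsistent with the model, or cannot support solution verification,
    # is improper regardless of how its domain of calibration evolves.
    if not (consistent_with_formulation and supports_solution_verification):
        return ProjectStatus.IMPROPER
    if domain_of_calibration_increasing:
        return ProjectStatus.PROGRESSIVE
    return ProjectStatus.STAGNANT


status = classify_project(
    consistent_with_formulation=True,
    supports_solution_verification=False,
    domain_of_calibration_increasing=True,
)
```

In this example the project is classified as improper even though its domain of calibration is growing, because the numerical results cannot be verified.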

References

[1] Allen, D. B. Digital Twins and Living Models at NASA. Keynote presentation at the ASME Digital Twin Summit. November 3, 2021.

[2] Grieves, M. and Vickers, J. Digital Twin: Mitigating Unpredictable, Undesirable Emergent Behavior in Complex Systems. In: Transdisciplinary Perspectives on Complex Systems. F-J. Kahlen, S. Flumerfelt and A. Alves (eds). Springer International Publishing, Switzerland, pp. 85–113, 2017.

[3] Szabó, B. and Actis, R. The demarcation problem in the applied sciences. Computers and Mathematics with Applications. Vol. 162, pp. 206–214, 2024. The publisher is providing free access to this article until May 22, 2024. Anyone may download it without registration or fees by clicking on this link: https://authors.elsevier.com/c/1isOB3CDPQAe0b

[4] Angeloni, P., Boccellato, R., Bonacina, E., Pasini, A., Peano, A. Accuracy Assessment by Finite Element P-Version Software. In: Adey, R.A. (ed) Engineering Software IV. Springer, Berlin, Heidelberg, 1985. https://doi.org/10.1007/978-3-662-21877-8_24

Related Blogs:

- Where Do You Get the Courage to Sign the Blueprint?

- A Memo from the 5th Century BC

- Obstacles to Progress

- Why Finite Element Modeling is Not Numerical Simulation?

- XAI Will Force Clear Thinking About the Nature of Mathematical Models

- The Story of the P-version in a Nutshell

- Why Worry About Singularities?

- Questions About Singularities

- A Low-Hanging Fruit: Smart Engineering Simulation Applications

- The Demarcation Problem in the Engineering Sciences

- Model Development in the Engineering Sciences

- Certification by Analysis (CbA) – Are We There Yet?

- Not All Models Are Wrong

Serving the Numerical Simulation community since 1989
